Zhanpeng He

I am a postdoctoral scholar at Stanford University, working with Jiajun Wu and C. Karen Liu.

I obtained my PhD in Computer Science from Columbia University, where I was co-advised by Matei Ciocarlie and Shuran Song. I was a member of the Robotic Manipulation and Mobility (ROAM) Lab and the Columbia Artificial Intelligence and Robotics Lab (now REAL@Stanford).

Prior to Columbia, I received my master's degree from the University of Southern California, where I worked with Gaurav Sukhatme.

Outside of research, I enjoy cooking, brewing coffee, and playing with my dog Nova. Here is a picture of Nova being corrupted by Gaussian noise.

[Stanford] I am seeking highly motivated undergraduate and MS students for robot learning projects at SVL and/or the Movement Lab. If you are interested, please fill out this form and send me an email. For Stanford MS students and undergraduates, the minimum commitment is 15 hours per week for 6 months.

[External] I welcome collaboration on interesting projects! Please feel free to contact me if you'd like to chat. However, due to my limited bandwidth, I may not be able to respond to all emails.

Research

I'm a full-stack roboticist -- I believe robotics must be solved through progress in many different integral components. While scaling up data collection is important, we also need to design the right hardware (e.g., manipulators and contact sensors) and learning algorithms (e.g., learning from imbalanced sensor data) to enable robust policies on real robots for contact-rich tasks. To this end, my research includes:

- Policy learning (e.g., imitation learning, reinforcement learning) for contact-rich tasks;

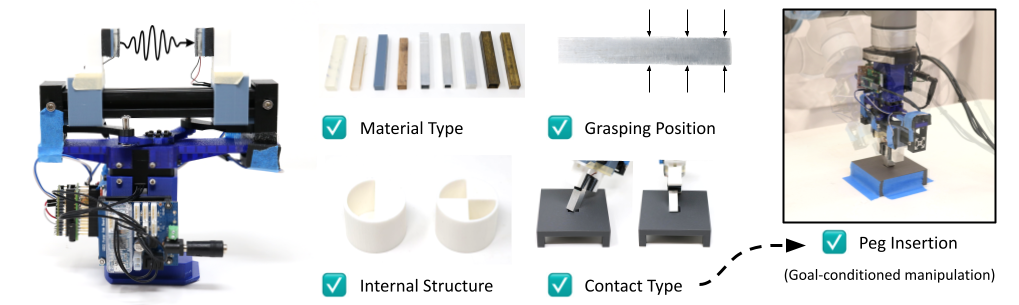

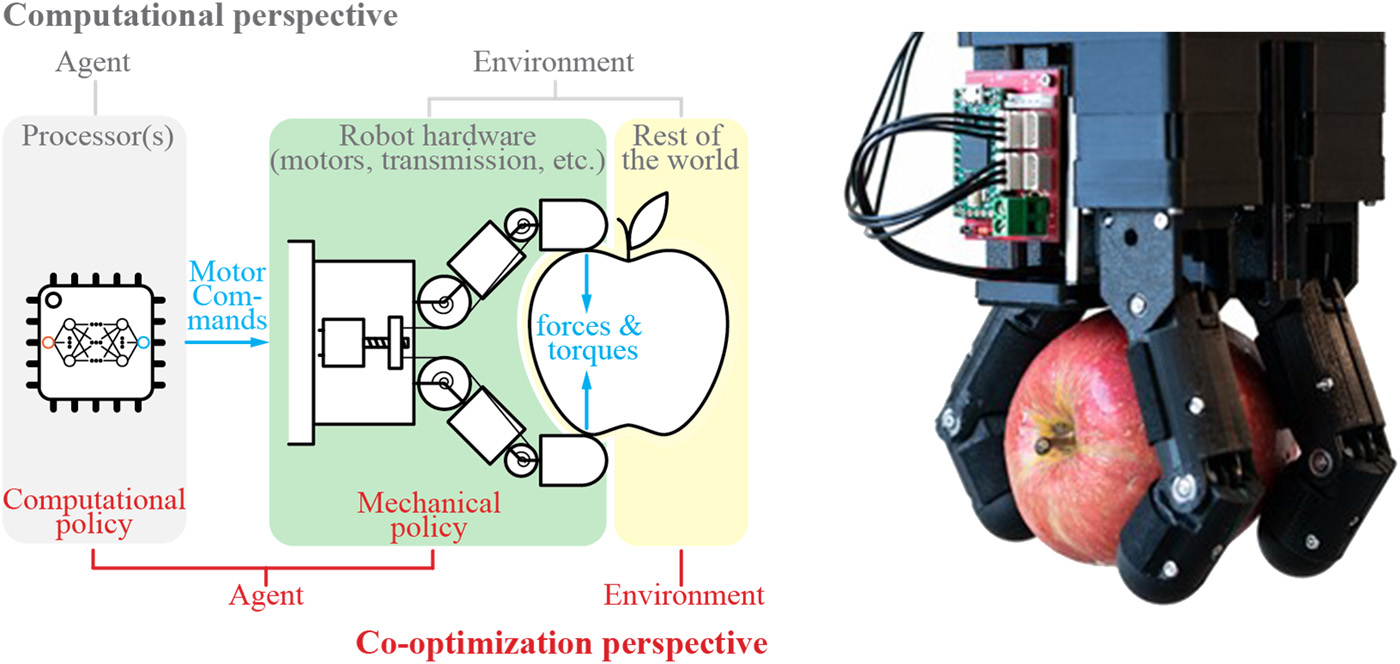

- Hardware design (e.g., manipulators, data collection systems, and contact sensors) and task-driven hardware optimization;

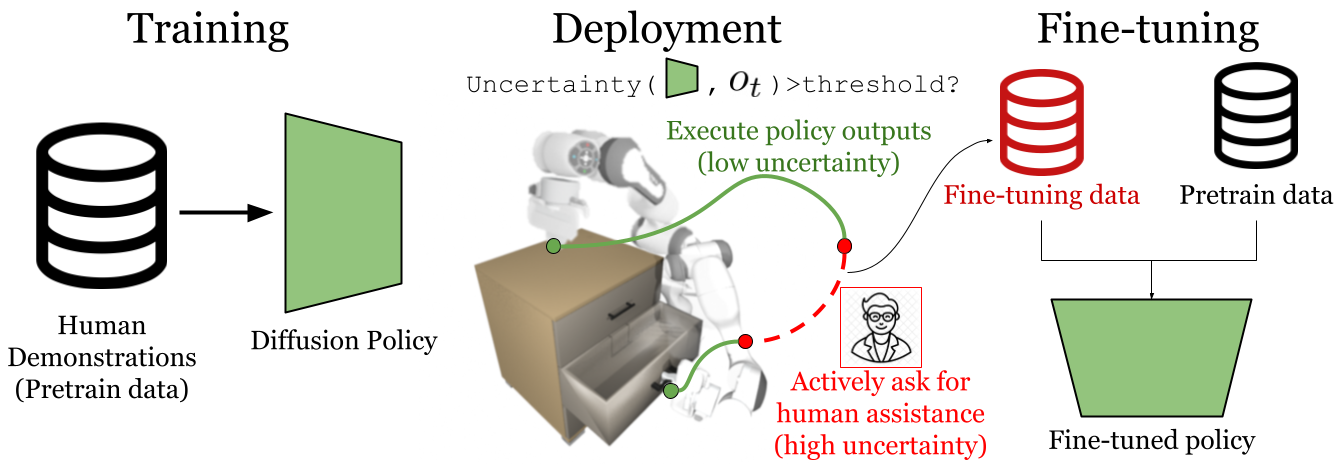

- Human-in-the-loop policy learning and deployment.

Publications (* indicates equal contribution and joint first authorship)

Eric T. Chang*, Peter Ballentine*, Zhanpeng He*, Do-gon Kim, Kai Jiang, Hua-Hsuan Liang, Joaquin Palacios, William Wang, Ioannis Kymissis, Matei Ciocarlie

Sharfin Islam*, Zewen Chen*, Zhanpeng He*, Swapneel Bhatt, Andres Permuy, Brock Taylor, James Vickery, Zhengbin Lu, Cheng Zhang, Pedro Piacenza, Matei Ciocarlie

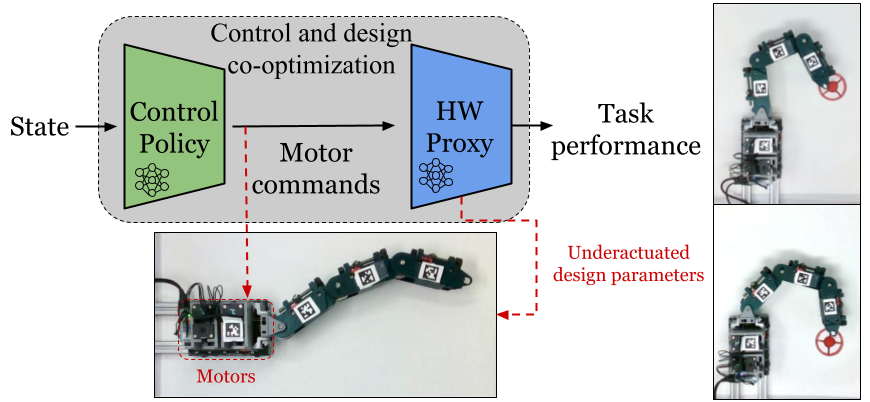

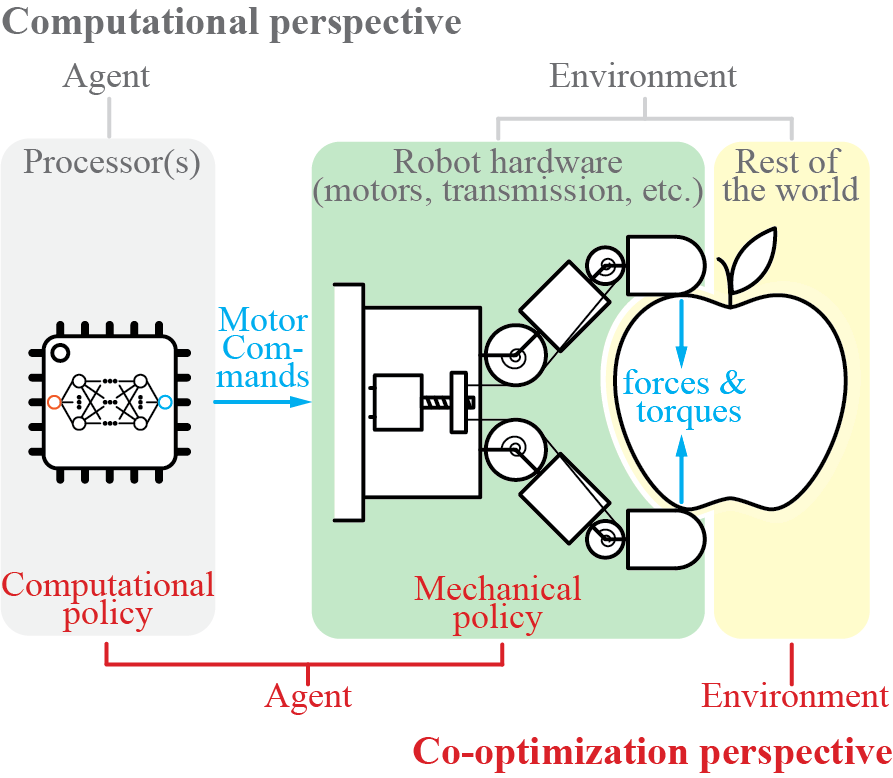

Zhanpeng He*, Yifeng Cao*, Matei Ciocarlie

Reginald McLean, Evangelos Chatzaroulas, Luc McCutcheon, Frank Röder, Tianhe Yu, Zhanpeng He, KR Zentner, Ryan Julian, JK Terry, Isaac Woungang, Nariman Farsad, Pablo Samuel Castro

Do-Gon Kim*, Kaidi Zhang*, Hua-Hsuan Liang, Eric T Chang, Zhanpeng He, Ioannis Kymissis, Matei Ciocarlie

Sharfin Islam*, Zhanpeng He*, Matei Ciocarlie

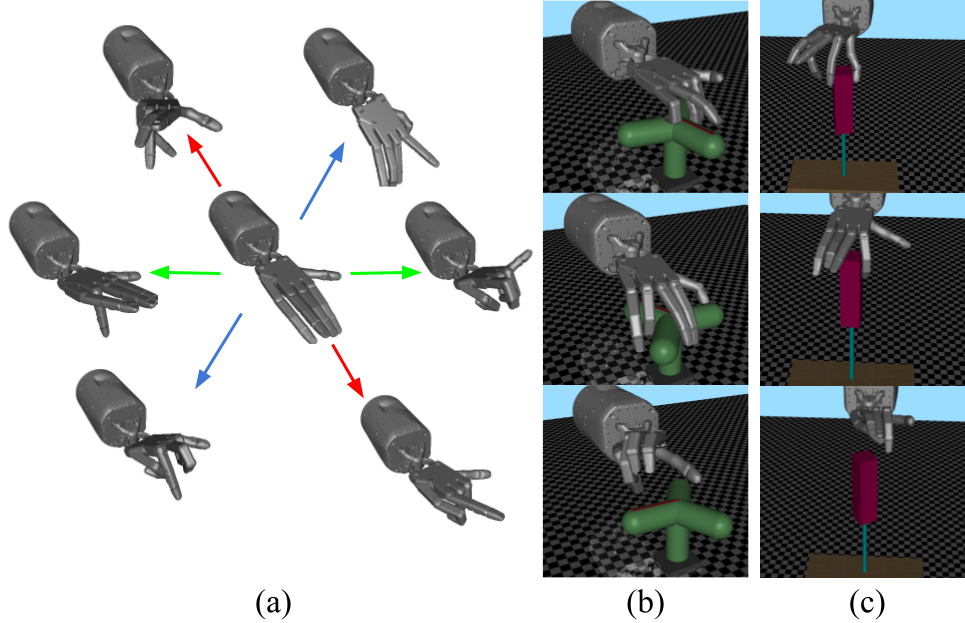

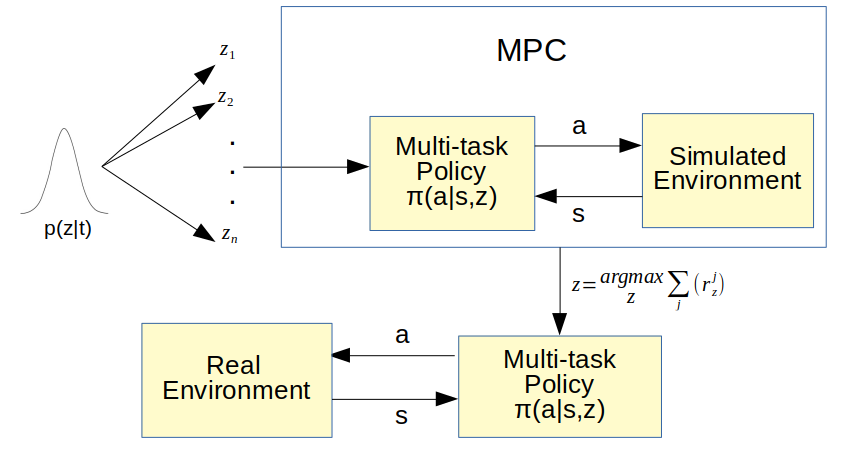

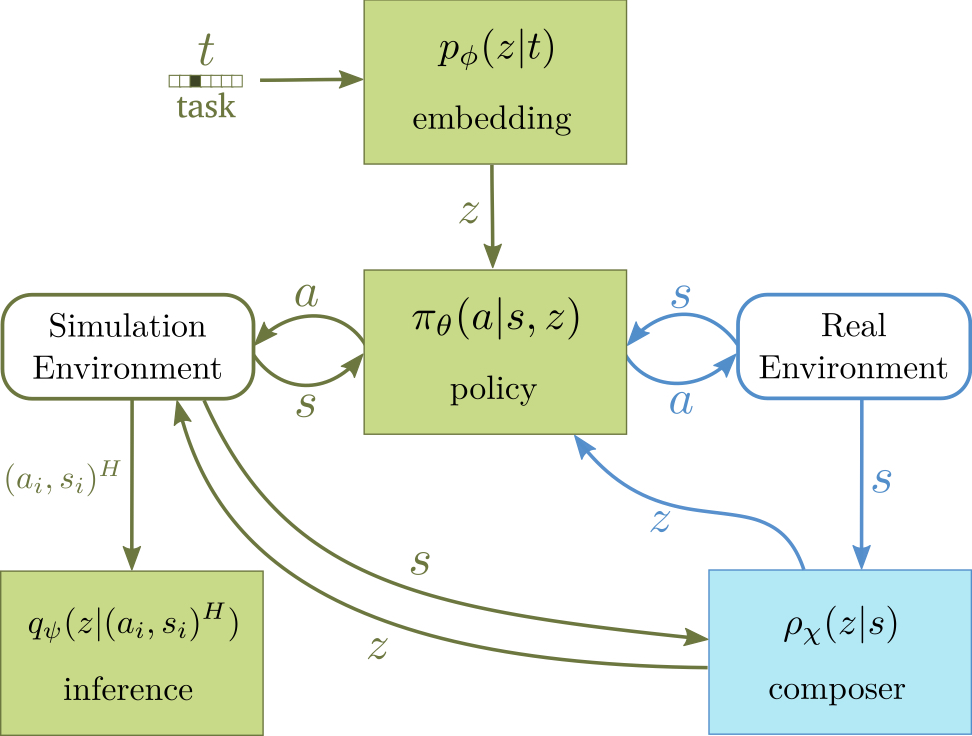

Zhanpeng He, Matei Ciocarlie

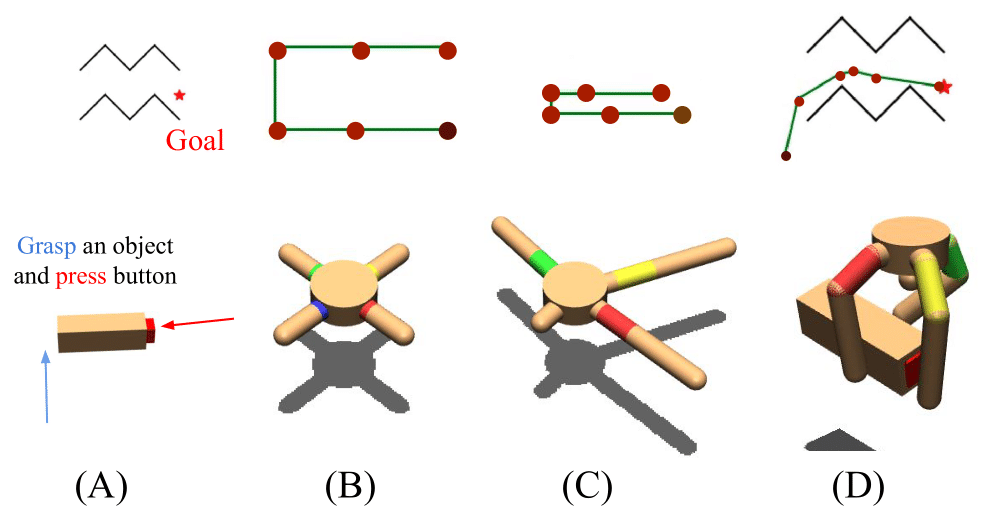

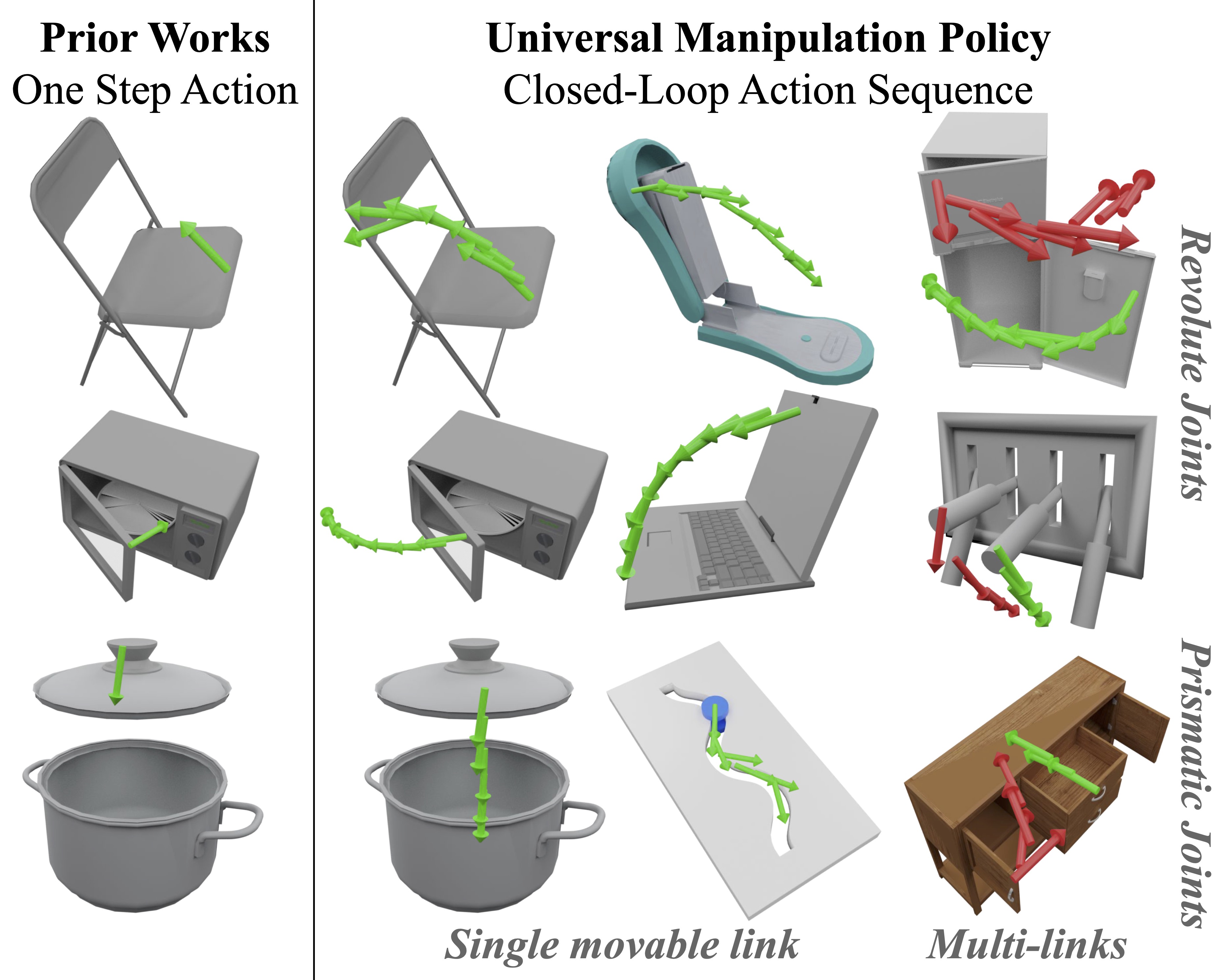

Siddharth Singi*, Zhanpeng He*, Alvin Pan, Sandip Patel, Gunnar A. Sigurdsson, Robinson Piramuthu, Shuran Song, Matei Ciocarlie

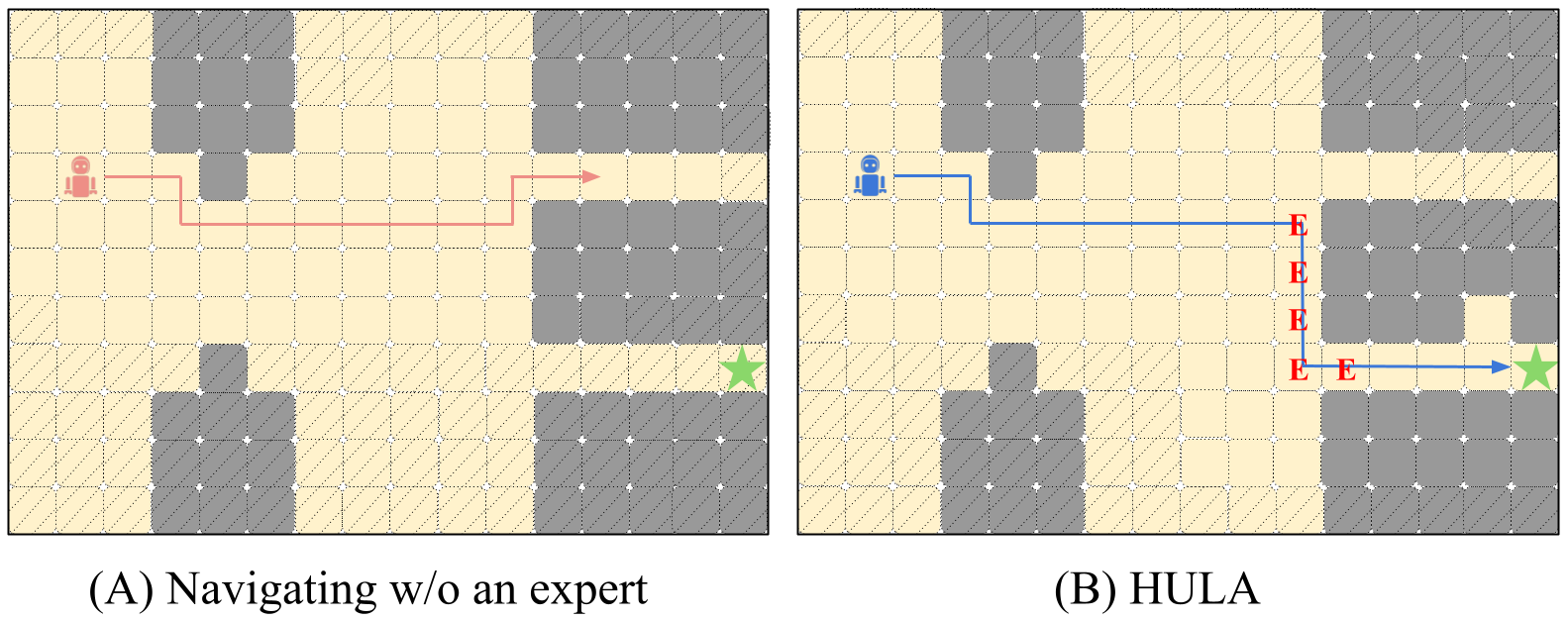

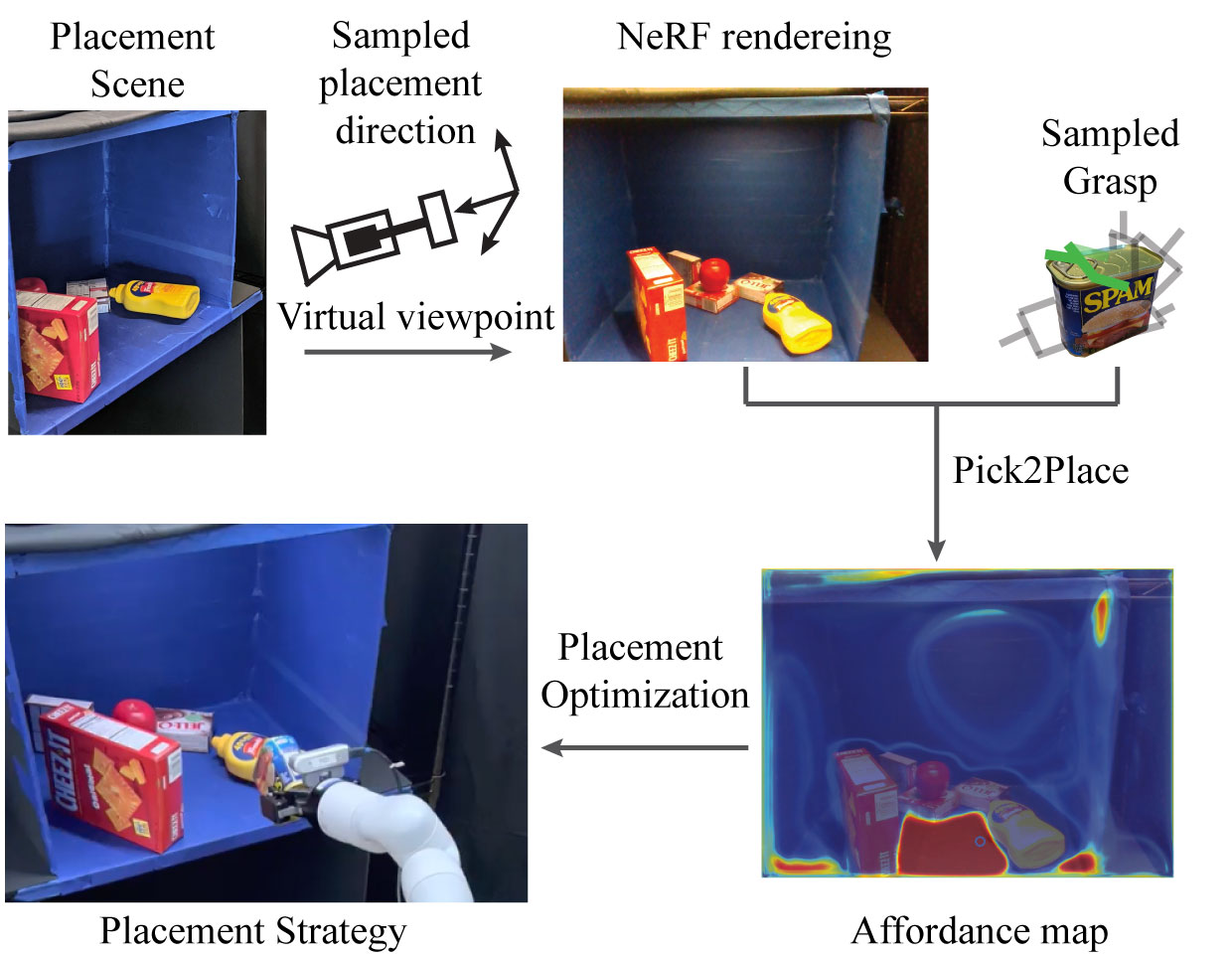

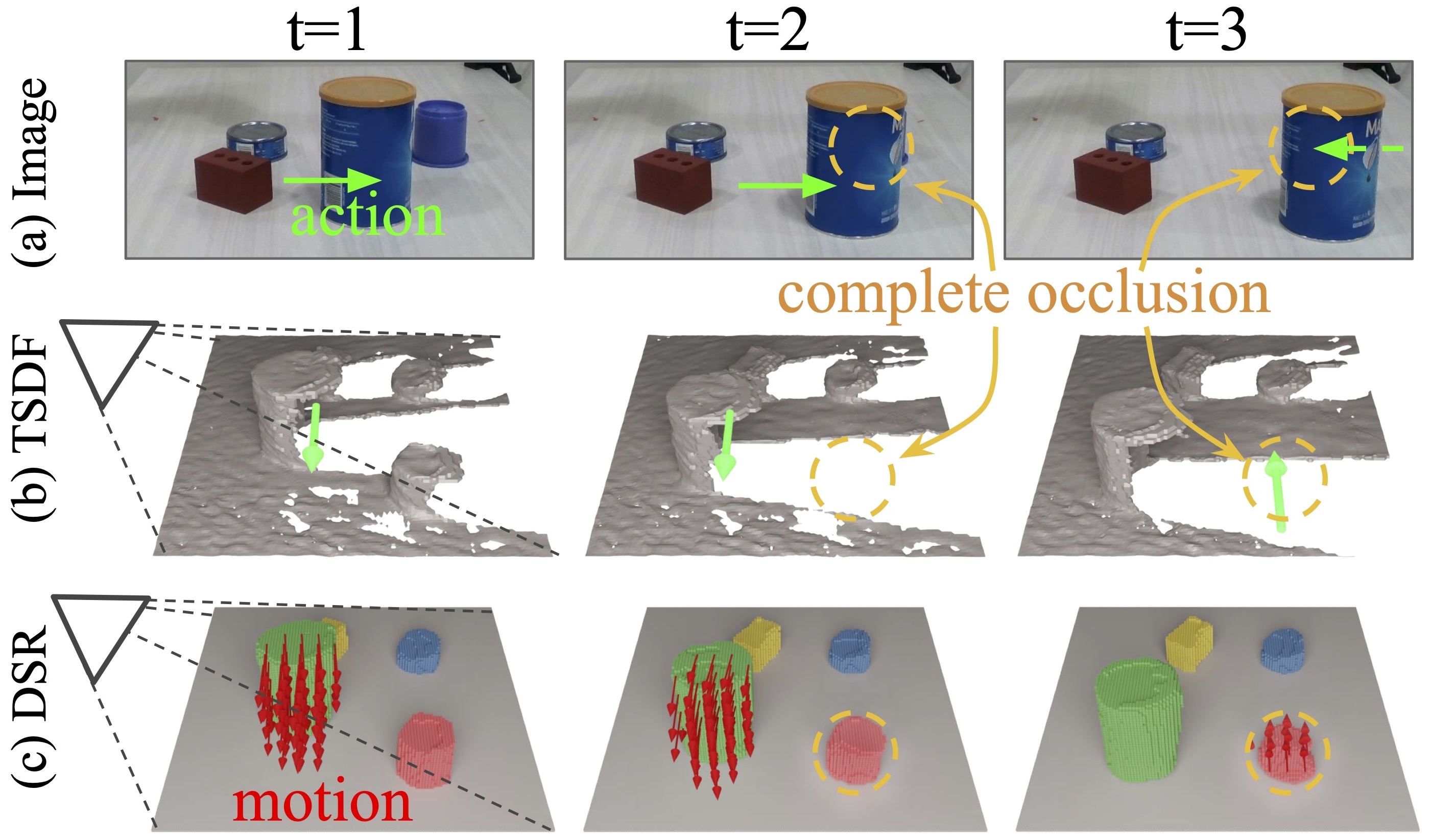

Zhanpeng He, Nikhil Chavan-Dafle, Jinwook Huh, Shuran Song, Volkan Isler

Software

I was a member of rlworkgroup and took part in the development of several open-source projects for robot learning.

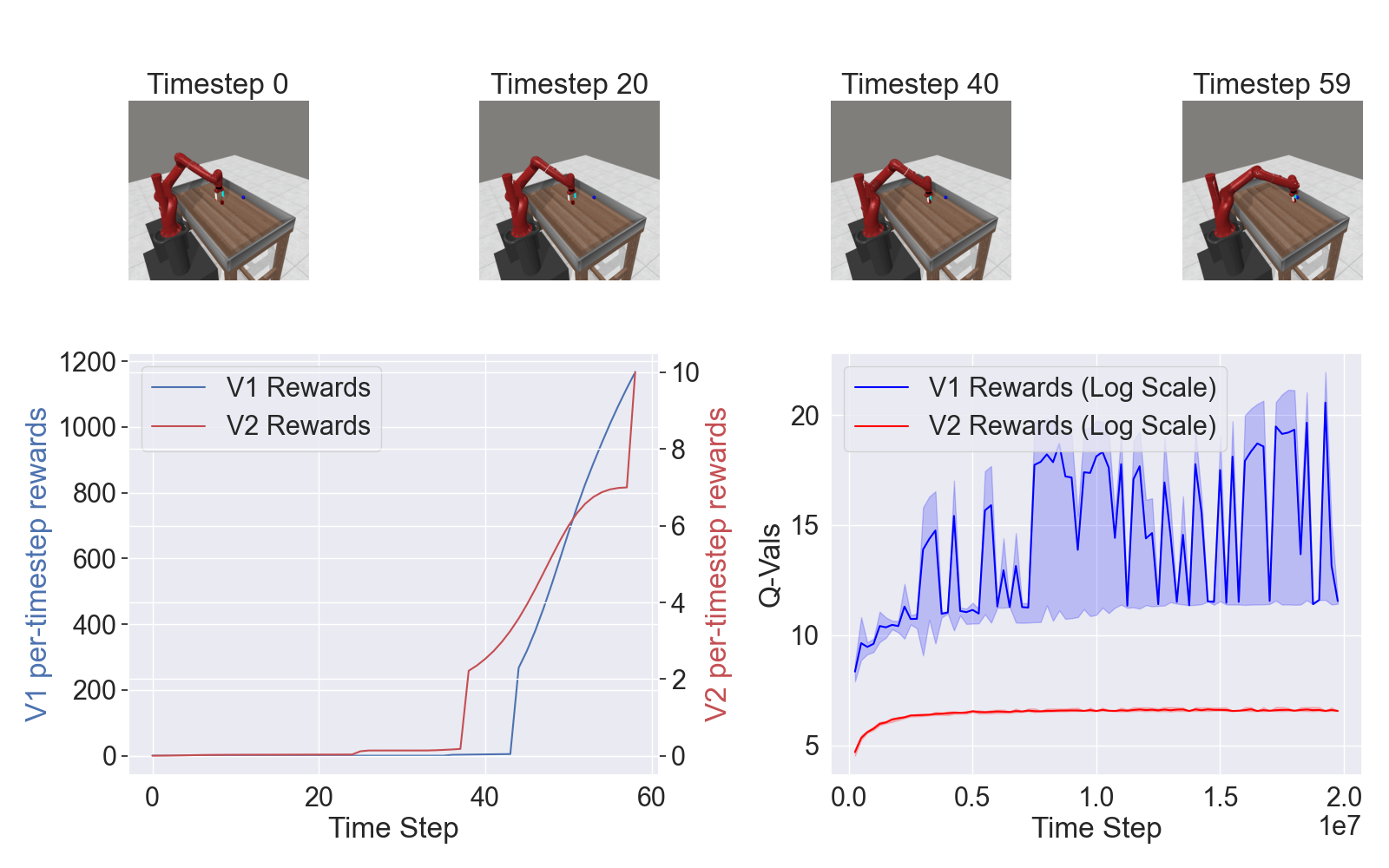

- Garage: A toolkit for reproducible reinforcement learning research.

- Dowel: A little logger for machine learning research.

- Meta-World: A collection of environments for benchmarking meta-learning and multi-task reinforcement learning algorithms.